OpenClaw: The Local AI Agent That Executes Tasks

Feb 24, 2026

A few weeks ago, a developer side project hit 100,000 GitHub stars and 2 million website visitors in a single week.

No ad campaign. No VC. No product launch event. Just a tool that felt genuinely different - and the internet noticed.

The project is called OpenClaw. Its public naming journey: Clawdbot first, then Moltbot (January 27, 2026), then OpenClaw (January 29, 2026) - after a trademark-related request from Anthropic. ("Clawd" appears in the project's orbit as an earlier internal name/mascot, but was not the publicly released project name.) The core idea has stayed the same since day one.

This is my attempt to explain what it actually is - not the hype version, not the fear version, just the real version.

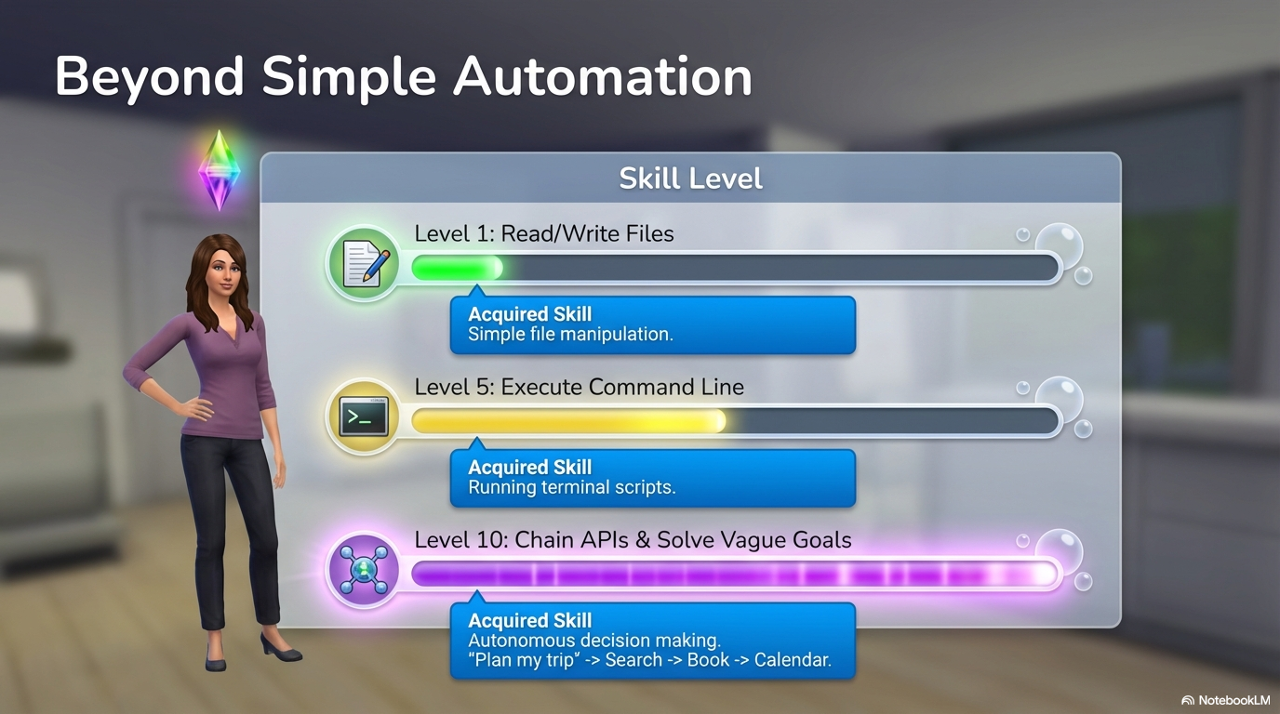

OpenClaw is a local-first AI agent that runs on your own computer. It connects to the messaging apps you already use and executes real tasks - files, commands, APIs - depending on which tools and permissions you enable.

What makes it different from ChatGPT or Claude?

This is the key question - but it can no longer be answered today with "chat vs. agent."

ChatGPT, Claude, and Gemini are long past being simple chat windows. With paid plans, desktop apps, and agent features, they can plan tasks, call tools, execute code, process files, and automate to a limited degree. You can configure your own agents, define workflows, and connect external contexts.

The difference is not what is fundamentally possible, but where it happens and under whose control.

With ChatGPT, Claude & Co., agent logic, tool execution, and context management all run centrally in the providers' cloud. Users control behavior and capabilities - but don't operate their own runtime environment. What the agent can do, how long it "lives," and which tools are available all remain tied to the product, subscription, and platform.

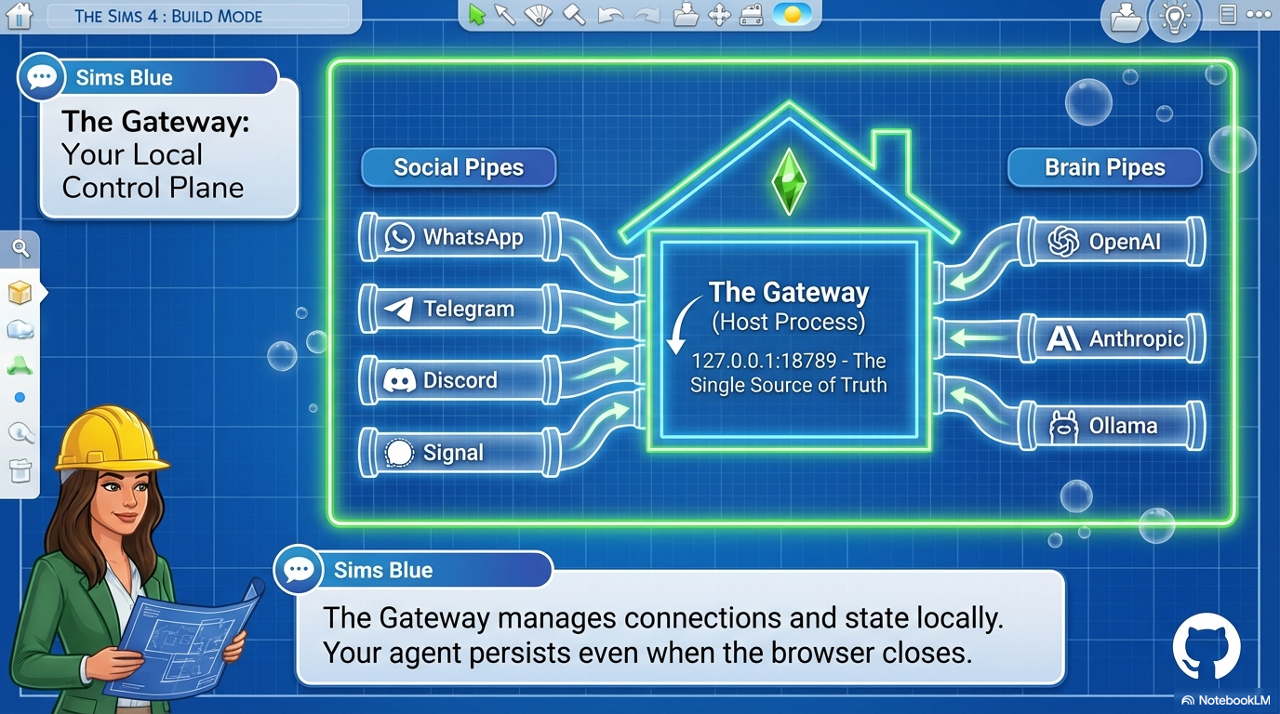

OpenClaw starts from a different place. It isn't a chat product with agent features - it's an agent runtime that runs locally or on your own infrastructure. The Gateway (control plane) runs on your own system, tools and skills do too - and the connected AI model is interchangeable. Whether a cloud model or a local one is used is the user's decision.

The real difference is therefore not capability, but operation and control: Who runs the agent? Where do the tools execute? Who controls permissions, data flows, and integrations?

This shift - from a configured cloud agent to a self-operated agent runtime - is the core of what OpenClaw is actually about.

What's new about this (and what isn't)

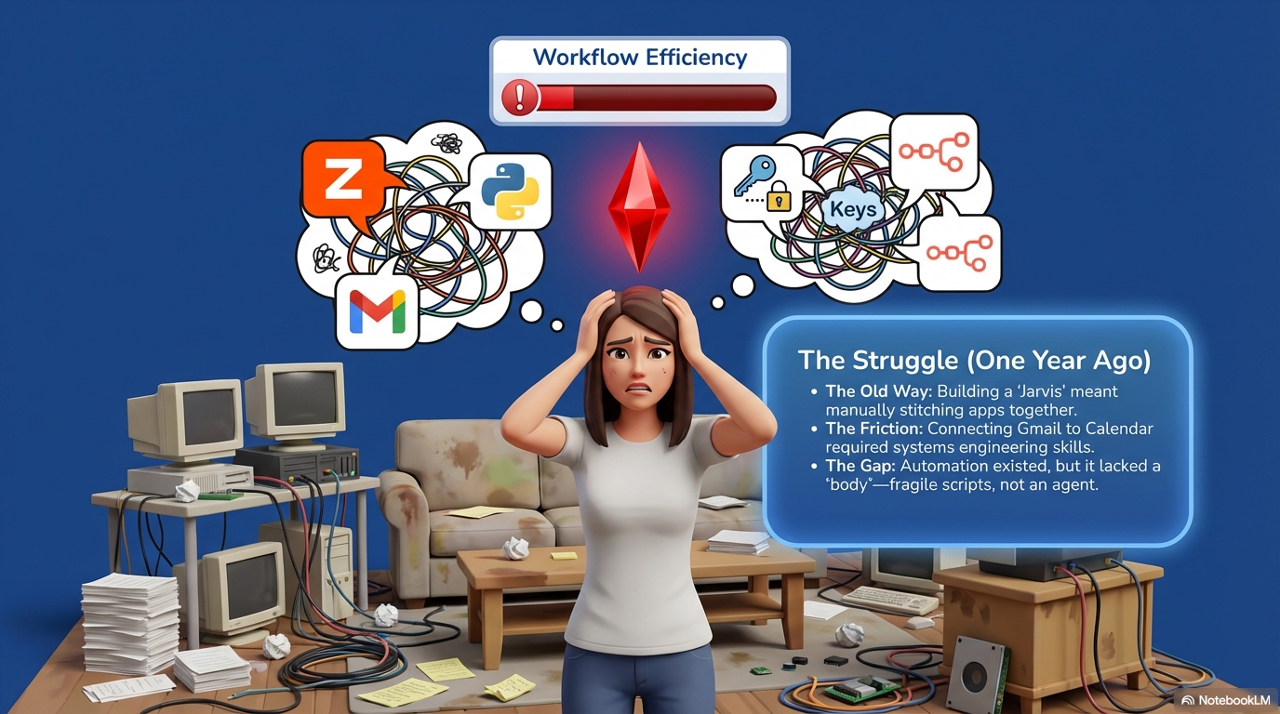

It's important to put this in context: much of what OpenClaw demonstrates was technically possible before. Open-source multi-agent frameworks for agent orchestration, tool-calling, local execution, and workflows have existed for years.

With Claude Code, Kiro, GitHub Copilot, or similar coding assistants, you can build prototypes, automations, and entire tools - including what is often described today as "vibecoding," which is increasingly moving in a specification-driven direction: "spec-driven development."

The difference is less whether it was possible, and more how accessible it was. OpenClaw has made these tools and technical possibilities visible, brought them together, and simplified many parts of the experience.

That doesn't change the fact that gaps remain, and that users still need technical understanding to assess risks, permissions, model backends, and skill code.

Nevertheless, OpenClaw has achieved something decisive: it has lowered the barrier to entry - not just for traditional developers, but also for people with technical understanding who previously had no direct access to locally hosted open-source frameworks and coding-assistant workflows.

Where did OpenClaw come from?

The creator is Peter Steinberger, an Austrian developer widely known in the iOS open-source community and as the founder of PSPDFKit (now Nutrient), one of the most-used PDF frameworks in the iOS ecosystem. He built it in late November 2025 as a weekend side project - originally a WhatsApp relay that connected a local AI to a messaging app.

The public naming journey: Clawdbot → Moltbot (January 27, 2026) → OpenClaw (January 29, 2026). The rename to Moltbot was associated with a trademark-related request from Anthropic - the exact details were not fully disclosed publicly. A community brainstorm followed, a lobster mascot became lore, and on January 29, 2026, it landed on OpenClaw.

On January 29, 2026, when he published the blog post "Introducing OpenClaw," the project had already crossed 100,000+ GitHub stars. A single week had brought 2 million visitors. By late February 2026: approximately 222,000 stars and 42,400+ forks - an unusual number for an open-source project at 90 days old.

The four building blocks

The Gateway is the control center - a long-running process on your machine (127.0.0.1:18789 by default) that connects the AI model to everything else. It manages your channel connections, maintains sessions, and tells the AI model in real time what it's currently capable of doing. Configuration and session state persist across restarts.

SKILLS are the knowledge extensions. From a community registry called ClawHub, you can install modules that teach the agent domain-specific behavior. Skills are also the primary supply-chain attack surface (see the security section below).

Channels are the connective tissue to the outside world. You don't interact through a new app. You message the agent through WhatsApp or Slack or iMessage - whatever you already use.

Tools are the literal hands. With your permission, the agent can execute shell commands, access your filesystem, call external APIs. The actual permissions vary by tool and configuration (host vs. sandbox vs. container).

The thing nobody says in the intro posts: security - three distinct risk classes

OpenClaw's own documentation calls its access levels "spicy." That's honest framing. But it's important to separate three risk classes that are frequently conflated:

1. Indirect prompt injection — Your agent processes content it reads from the world: emails, web pages, documents. An attacker can embed hidden instructions in that content. The agent reads them, interprets them as legitimate commands, and acts. This is a general, not-yet-solved problem in the field of AI agents, which OpenClaw explicitly acknowledges in its docs — without having a complete technical solution.

2. Exposed gateway / token theft (CVE class) - CVE-2026-25253, for example, involved token leakage through an automatically established WebSocket connection when a gatewayUrl parameter was accepted from a query. This is distinct from prompt injection: a misconfiguration/implementation vulnerability that can hand an attacker gateway administrator access - without any email, without social engineering. Multiple CVEs have been published for specific OpenClaw versions.

3. Skills supply-chain - Third-party skills on ClawHub are code. Over 400 malicious skills were reported. OpenClaw responded with a VirusTotal partnership to scan ClawHub submissions. This is the same supply-chain risk that exists for npm or PyPI - except these skills have access to your machine.

The team has responded on all three fronts: Gateway binds to localhost by default, connections require authentication tokens, there's a built-in security audit CLI with a --fix flag, and a formal security threat model has been published. The risks are real and distinct. It's worth knowing which one you're mitigating.

Who is this actually for right now?

Honestly: technical users and developers who understand what they're turning on - including which model backend they're configuring (and whether they're comfortable with that model receiving their data), and which skills they're installing.

This is not yet a "my grandparents can install it" product. But the direction is relevant for everyone who works with AI. We are moving from tools that explain things to tools that do things.

The OpenClaw Foundation was established in February 2026 to keep the project open, model-agnostic, and outside the control of any single company.

What's next

In the next posts I'll go deeper on:

- Why it really went viral - the actual mechanics behind 2 million visitors

- Moltbook - an AI-only social network independently built by someone else

- How local AI agents compare to cloud AI when it comes to your data

- Prompt injection explained for normal humans

- Open-source alternatives that do similar things